Infinite ideas, finite brain. Nobody warned us this would be the problem.

I downloaded four AI tools in one week last February. Not because I needed four, but because someone on a podcast mentioned one, a colleague sent me another, a LinkedIn post made a third look irresponsible to ignore, and the fourth? I genuinely can’t remember how the fourth one happened.

I had tabs open for all of them. Tutorial videos queued. A Clawdbot half-configured for a workflow I hadn’t finished designing yet. A voice note to myself that began with the words “okay so what if we” and ended forty seconds later with nothing resolved.

I got nothing on my list done that week.

This isn’t just a productivity problem. It’s a new kind of cognitive event that didn’t have a name until recently because it didn’t exist until recently. Researchers are starting to catch up. The rest of us have already been living it.

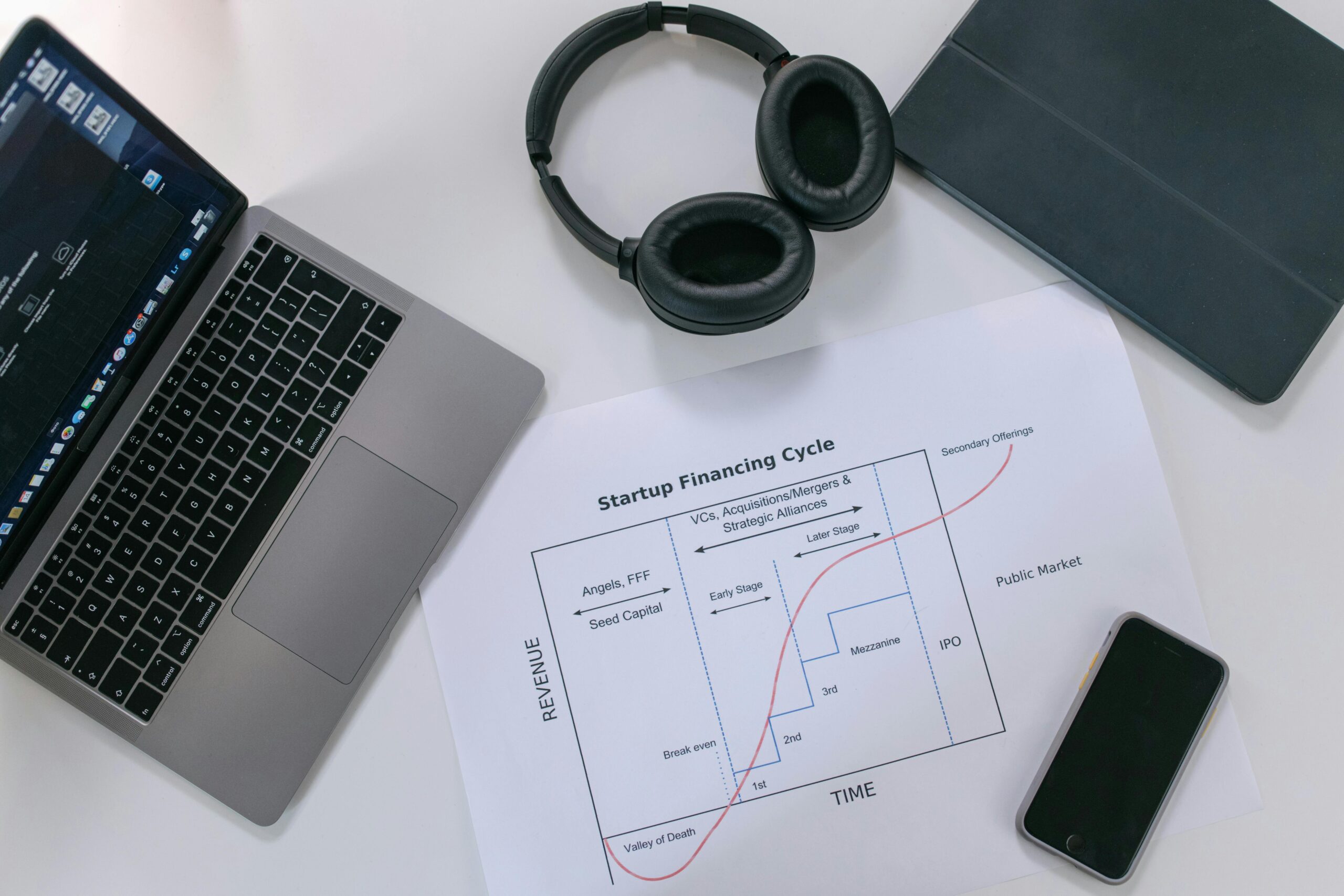

The Deskilling Effect of AI

In early 2026, Anthropic’s own internal research found that developers using AI coding tools experienced measurable declines in debugging ability and code comprehension. The tool made them faster. It also made them weaker. John Nosta, writing in Psychology Today, called it the AI rebound effect, the phenomenon where an AI-driven productivity spike masks an underlying erosion of skill. As he put it, when automation handles the details, situational awareness dulls. The mental models that once guided expert judgment shrink because the system is doing what the person once did themselves.

The skill doesn’t simply return when the tool goes away. It comes back lower.

That’s the deskilling story. But there’s a second story running parallel to it, and it gets far less coverage. Not what AI takes from your existing skills. What AI does to your capacity to focus on building anything at all. This one isn’t about atrophy. It’s about volume, and it’s where most of us actually live.

In this new era of AI tools, it’s as if someone handed every ideas person on the planet a key to an infinite candy store.

Open 24 hours. Every flavor imaginable. No limit. No bill.

To the other ideas people, the founders, the operators, the builders, the early adopters who have been waiting their whole careers for something like this: we walked straight in and started grabbing.

Some of us are still in there. Slightly glazed. Surrounded by empty wrappers. Wondering why we feel overstimulated and haven’t done anything real in three weeks.

That’s the AI candy crash. I’m officially coining it, because apparently someone had to.

The bottleneck used to be access to ideas. Now the bottleneck is you.

The Bottleneck Isn’t Ideas, It’s You

There’s a specific version of this that early adopters experience, and it’s worth naming precisely because it’s different from ordinary distraction. I’m going to call it AIFOMO, and before you roll your eyes at the acronym, hear me out, because you’ve already had it.

AIFOMO isn’t fear of missing out on social plans. It’s the specific anxiety of hearing a colleague describe how they’re using a tool you haven’t tried yet and immediately feeling behind. The podcast rabbit hole that starts with genuine curiosity and ends with seventeen open tabs and a mild identity crisis about whether you’re actually keeping up. It’s the moment you finally master a workflow, build it into your system, feel genuinely good about it. Then something new launches and the cycle resets completely.

The early adopter paradox in an AI era: the instinct that made you valuable is the same instinct that’s now working against you.

You can’t chase everything, and the cost of trying isn’t just lost time. It’s lost depth.

The researchers framing this as a cognitive load problem aren’t wrong, but they’re describing it too clinically. This isn’t abstract overload. It’s something more specific: you’re making more decisions about ideas per hour than the human brain was calibrated to handle, and most of those decisions involve genuinely good options. The difficulty isn’t filtering noise. It’s filtering signal.

Bad ideas are easy to ignore. It’s the good ones that break your focus.

A half-built workflow. A voice note you meant to develop. A Clawdbot configured for something you haven’t had time to use yet. These aren’t failures. They’re evidence of a brain that recognized real potential and had no system for deciding what to act on and what to set down.

The candy crash isn’t about weak focus. It’s about strong curiosity meeting infinite supply with no circuit breaker.

How to Navigate Infinite AI Ideas

Before you open an AI session, know what quarter you’re in. Not the calendar quarter. Your strategic quarter. What’s the one outcome this period is organized around? Write it somewhere visible. It takes twenty seconds. It changes everything about which ideas are actionable and which are just interesting.

Build a fate file, not a folder. There’s a difference. A folder is where ideas go to die quietly. A fate file is a single document where you drop ideas with a one-line note on why they’re worth returning to and when. Next quarter. Next year. Never, but interesting. The idea gets captured, the decision gets made, and your brain stops holding it in the background.

When an AI session generates ten directions and you want to chase all of them, pick one and assign the rest a date. Not a someday. A date. The calendar is the only system your curiosity will actually respect.

And when a colleague sends you something that makes you feel immediately behind, and they will because they’re also in the candy store, give yourself the rule that you’ll look at it Friday. Not now. The competitive urgency is almost never real. The distraction cost always is.

The most effective people I’ve watched navigate this moment share one visible trait. They walked into the candy store with a list, bought what they came for, and left before the crash. They’re not less curious. They’re more deliberate about where curiosity is allowed to run.

AIFOMO is real.

The early adopter instinct is real. The competitive pressure to stay current is real, and so is the ceiling your brain hits when you try to process all of it at once.

The researchers warned us that over-reliance on AI could dull the skills it was meant to amplify. What they didn’t fully map is the adjacent risk, that the sheer generativity of these tools would create a new kind of cognitive tax on the people most drawn to using them.

We’re not in a productivity crisis. We’re in a prioritization crisis wearing productivity’s clothes.

There’s such a thing as too much of a good thing. Building the guardrails to manage that isn’t a retreat from ambition.

It’s what ambition looks like when it’s running on a sustainable engine.
